New Brain-Computer Interface Converts Brain Signals Into Speech With up to 97% Accuracy

UC Davis Health has developed a groundbreaking brain-computer interface (BCI) that allows individuals with speech impairments, particularly those suffering from ALS, to communicate effectively.

This innovative system translates brain signals into speech with up to 97% accuracy, representing a significant breakthrough in neuroprosthetics. A clinical trial participant, Casey Harrell, who has ALS, successfully used the device to regain his ability to communicate. The technology, which uses microelectrode arrays implanted in the brain, has shown remarkable results in real-time speech decoding, offering hope and empowerment to those who have lost the ability to speak.

Revolutionary Brain-Computer Interface Enables Communication

A new brain-computer interface (BCI) developed at UC Davis Health translates brain signals into speech with up to 97% accuracy — the most accurate system of its kind.

The researchers implanted sensors in the brain of a man with severely impaired speech due to amyotrophic lateral sclerosis (ALS). The man was able to communicate his intended speech within minutes of activating the system.

A study about this work was published today (August 14) in the New England Journal of Medicine.

Understanding ALS and Its Impact on Speech

ALS, also known as Lou Gehrig’s disease, affects the nerve cells that control movement throughout the body. The disease leads to a gradual loss of the ability to stand, walk, and use one’s hands. It can also cause a person to lose control of the muscles used to speak, leading to a loss of understandable speech.

Cutting-Edge Technology Restores Communication

The new technology is being developed to restore communication for people who can’t speak due to paralysis or neurological conditions like ALS. It can interpret brain signals when the user tries to speak and turn them into text that is ‘spoken’ aloud by the computer.

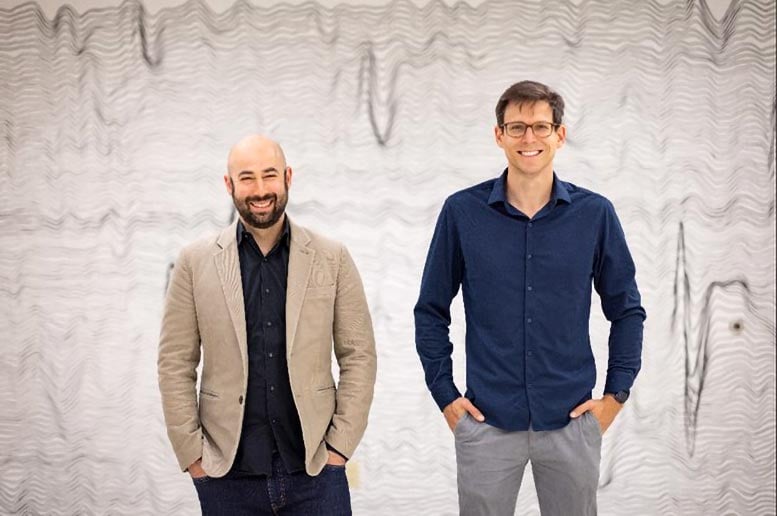

“Our BCI technology helped a man with paralysis to communicate with friends, families, and caregivers,” said UC Davis neurosurgeon David Brandman. “Our paper demonstrates the most accurate speech neuroprosthesis (device) ever reported.”

Brandman is the co-principal investigator and co-senior author of this study. He is an assistant professor in the UC Davis Department of Neurological Surgery and co-director of the UC Davis Neuroprosthetics Lab.

How the BCI Device Works

When someone tries to speak, the new BCI device transforms their brain activity into text on a computer screen. The computer can then read the text out loud.

To develop the system, the team enrolled Casey Harrell, a 45-year-old man with ALS, in the BrainGate clinical trial. At the time of his enrollment, Harrell had weakness in his arms and legs (tetraparesis). His speech was very hard to understand (dysarthria) and required others to help interpret for him.

In July 2023, Brandman implanted the investigational BCI device. He placed four microelectrode arrays into the left precentral gyrus, a brain region responsible for coordinating speech. The arrays are designed to record the brain activity from 256 cortical electrodes.

“We’re really detecting their attempt to move their muscles and talk,” explained neuroscientist Sergey Stavisky. Stavisky is an assistant professor in the Department of Neurological Surgery. He is the co-director of the UC Davis Neuroprosthetics Lab and co-principal investigator of the study. “We are recording from the part of the brain that’s trying to send these commands to the muscles. And we are basically listening into that, and we’re translating those patterns of brain activity into a phoneme — like a syllable or the unit of speech — and then the words they’re trying to say.”
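The two-stage idea Stavisky describes, brain activity to phonemes and then phonemes to words, can be illustrated with a toy sketch. The article does not describe the decoder's internals, so everything below is an assumption made for illustration: the phoneme labels, the per-frame label stream, the silence marker, and the tiny `LEXICON` are hypothetical, and a real system would rely on trained neural and language models rather than a lookup table.

```python
from itertools import groupby

# Toy illustration only: labels, frames, and lexicon are hypothetical,
# not taken from the study.
SILENCE = "_"

# Hypothetical pronunciation lexicon mapping phoneme sequences to words.
LEXICON = {
    ("HH", "AY"): "hi",
    ("M", "AA", "M"): "mom",
}


def collapse_frames(frame_labels):
    """Merge runs of identical consecutive labels into a single label."""
    return [label for label, _ in groupby(frame_labels)]


def phonemes_to_words(frame_labels):
    """Split the collapsed phoneme stream at silences and look up each chunk."""
    words, current = [], []
    # A trailing silence is appended so the final word is always flushed.
    for label in collapse_frames(frame_labels) + [SILENCE]:
        if label == SILENCE:
            if current:
                words.append(LEXICON.get(tuple(current), "<unk>"))
                current = []
        else:
            current.append(label)
    return words


# One predicted label per time step, as a decoder might emit them.
frames = ["_", "HH", "HH", "AY", "AY", "AY", "_", "_", "M", "AA", "AA", "M", "_"]
print(phonemes_to_words(frames))
```

The sketch only shows why an intermediate phoneme layer is convenient: a small, fixed set of sound units can be recombined into a very large vocabulary without training a separate detector for every word.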

Faster Training, Better Results

Despite recent advances in BCI technology, efforts to enable communication have been slow and prone to errors. This is because the machine-learning programs that interpret brain signals have required a large amount of time and data to perform well.

“Previous speech BCI systems had frequent word errors. This made it difficult for the user to be understood consistently and was a barrier to communication,” Brandman explained. “Our objective was to develop a system that empowered someone to be understood whenever they wanted to speak.”

Real-Time Speech Decoding: A Milestone Achievement

Harrell used the system in both prompted and spontaneous conversational settings. In both cases, speech decoding happened in real-time, with continuous system updates to keep it working accurately.

The decoded words were shown on a screen. Amazingly, they were read aloud in a voice that sounded like Harrell’s before he had ALS. The voice was composed using software trained with existing audio samples of his pre-ALS voice.

At the first speech data training session, the system took 30 minutes to achieve 99.6% word accuracy with a 50-word vocabulary.

“The first time we tried the system, he cried with joy as the words he was trying to say correctly appeared on-screen. We all did,” Stavisky said.

In the second session, the size of the potential vocabulary increased to 125,000 words. With just an additional 1.4 hours of training data, the BCI achieved 90.2% word accuracy with this greatly expanded vocabulary. After continued data collection, the BCI has maintained 97.5% accuracy.
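For readers unfamiliar with the metric, word accuracy in speech recognition is commonly reported as one minus the word error rate (WER), where WER counts the word substitutions, insertions, and deletions needed to turn the decoded sentence into the intended one, divided by the number of intended words. The article does not spell out how the study scored its sentences, so the sketch below assumes that common convention. The function name and example sentences are hypothetical.

```python
def word_error_rate(reference, hypothesis):
    """Word-level edit distance divided by the number of reference words."""
    ref, hyp = reference.split(), hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] holds the edit distance between the reference words processed
    # so far and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(prev[j] + 1,          # reference word missing from hypothesis
                            curr[j - 1] + 1,      # extra word in hypothesis
                            prev[j - 1] + cost))  # match or substitution
        prev = curr
    return prev[-1] / len(ref)


# Made-up example: one of six intended words is decoded incorrectly.
intended = "i would like some water please"
decoded = "i would like sun water please"
accuracy = 1 - word_error_rate(intended, decoded)
print(f"word accuracy: {accuracy:.1%}")
```

Under this convention, 97.5% accuracy corresponds to roughly one word error in every 40 intended words.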

Transformative Impact on Communication for ALS Patients

“At this point, we can decode what Casey is trying to say correctly about 97% of the time, which is better than many commercially available smartphone applications that try to interpret a person’s voice,” Brandman said. “This technology is transformative because it provides hope for people who want to speak but can’t. I hope that technology like this speech BCI will help future patients speak with their family and friends.”

The study reports on 84 data collection sessions over 32 weeks. In total, Harrell used the speech BCI in self-paced conversations for over 248 hours to communicate in person and over video chat.

Restoring Dignity Through Communication

“Not being able to communicate is so frustrating and demoralizing. It is like you are trapped,” Harrell said. “Something like this technology will help people back into life and society.”

“It has been immensely rewarding to see Casey regain his ability to speak with his family and friends through this technology,” said the study’s lead author, Nicholas Card. Card is a postdoctoral scholar in the UC Davis Department of Neurological Surgery.

“Casey and our other BrainGate participants are truly extraordinary. They deserve tremendous credit for joining these early clinical trials. They do this not because they’re hoping to gain any personal benefit, but to help us develop a system that will restore communication and mobility for other people with paralysis,” said co-author and BrainGate trial sponsor-investigator Leigh Hochberg. Hochberg is a neurologist and neuroscientist at Massachusetts General Hospital, Brown University, and the VA Providence Healthcare System.

Reference: “An Accurate and Rapidly Calibrating Speech Neuroprosthesis,” 14 August 2024, New England Journal of Medicine.

DOI: 10.1056/NEJMoa2314132

Funding: Office of the Assistant Secretary of Defense for Health Affairs, NIH/National Institute on Deafness and Other Communication Disorders, A.P. Giannini Foundation, the Simons Collaboration for the Global Brain, the Searle Scholars Program, Burroughs Wellcome Fund, University of California Davis School of Medicine, Department of Veterans Affairs, Howard Hughes Medical Institute, Wu Tsai Neurosciences Institute
